Pyramids, ladders, and traveling theories

Argument pyramids

In previous blogs, I argued that theories of change are really about a process of reaching a shared understanding: systematically examining your assumptions and the quality of the evidence underpinning the judgements you make.

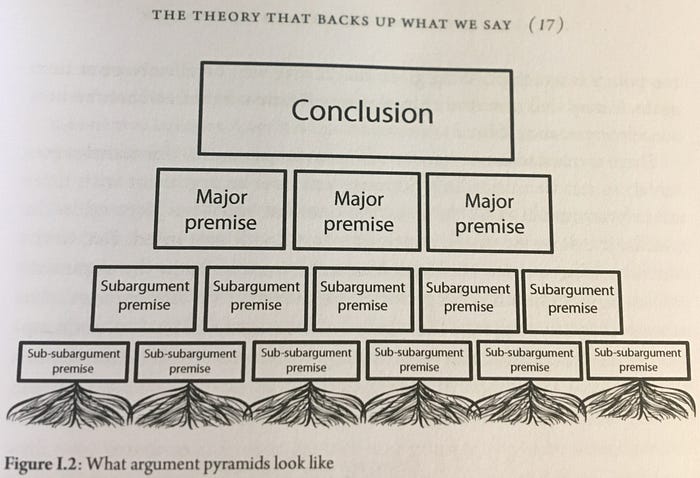

Theories of change are models of that shared understanding. All models are wrong, but some are better than others. Nancy Cartwright and Jeremy Hardie’s (2012: 15, 18) seminal book Evidence-Based Policy provides plenty of practical guidance on the good and bad of models, but one part that always stood out for me was their emphasis on the need for a “warrant” to justify a decision, an argument, or the claim that something is true: “my argument for buying this wine is that it is cheap.” This is a simple example of marshalling reasons to justify a claim. Such arguments can be represented as a set of propositions (or premises) and a conclusion. Evidence is there to provide these warrants and to justify your confidence that the claim is true. Cartwright and Hardie represent this as a form of argument pyramid, as you can see below in my amateurish photo:

The trick here is seeing how much support you need to hold up your argument. Cartwright and Hardie take the case of a murder to illustrate (as I did for Process Tracing). You may need various premises to make the case (access, motivation, capacity, etc.), but you stop when you have enough evidence, or enough good-quality evidence (thinking like a Bayesian), to make the case compelling. Yet, as the pyramid suggests, we’re often working at different levels, from smaller to larger premises and from smaller to larger claims. In other words, there are levels of theory.
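
To make the “thinking like a Bayesian” point a little more concrete, here is a minimal Python sketch of sequential Bayesian updating, the logic behind Bayesian approaches to Process Tracing. It is not Cartwright and Hardie’s own method. The murder-case evidence, the likelihoods, the prior, and the 0.9 stopping threshold are all invented for illustration, and updating one piece at a time assumes each piece of evidence is independent of the others given whether the claim is true or false.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Evidence:
    description: str
    p_if_true: float   # P(observing this evidence | claim is true)
    p_if_false: float  # P(observing this evidence | claim is false)


def update(prior: float, ev: Evidence) -> float:
    """Bayes' rule: return P(claim | evidence) given P(claim) before seeing it."""
    numerator = ev.p_if_true * prior
    return numerator / (numerator + ev.p_if_false * (1.0 - prior))


def assess(prior: float, evidence: list[Evidence], threshold: float) -> tuple[float, int]:
    """Update on each piece of evidence in turn, stopping once confidence reaches the threshold.

    Returns the final confidence and the number of pieces of evidence used.
    """
    confidence = prior
    for used, ev in enumerate(evidence, start=1):
        confidence = update(confidence, ev)
        if confidence >= threshold:
            return confidence, used
    return confidence, len(evidence)


# Hypothetical murder case: the premises loosely echo the text, the numbers are invented.
case = [
    Evidence("suspect had access to the house", p_if_true=0.9, p_if_false=0.3),
    Evidence("suspect had a motive", p_if_true=0.8, p_if_false=0.2),
    Evidence("suspect's fingerprints on the weapon", p_if_true=0.7, p_if_false=0.05),
    Evidence("a neighbour saw the suspect leave", p_if_true=0.6, p_if_false=0.1),
]

confidence, used = assess(prior=0.1, evidence=case, threshold=0.9)
print(f"confidence {confidence:.2f} after {used} of {len(case)} pieces of evidence")
```

With these made-up numbers, confidence passes the threshold after the fingerprint evidence, so the fourth premise isn’t needed to make the case compelling. Evidence that is much more likely if the claim is true than if it is false (a high ratio of `p_if_true` to `p_if_false`) moves confidence the most, which is why good-quality evidence matters more than sheer quantity.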

Ladders of abstraction

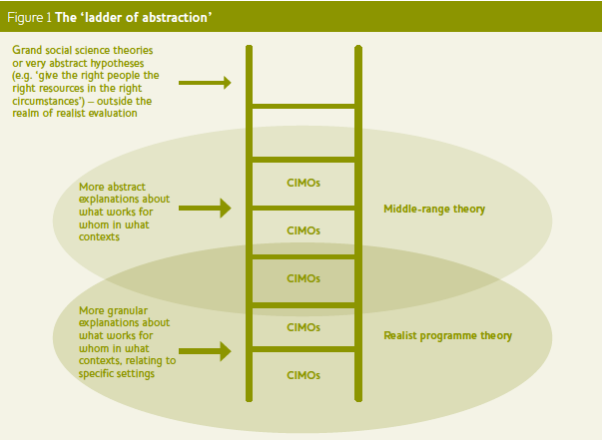

These different levels of theory also reflect different levels of abstraction. As Mel Punton et al. (2020) recently noted, theories of change rarely hypothesise what goes on underneath the arrows that link outputs, outcomes, and impacts. They are commonly too abstract to be properly evaluated.

As I mentioned previously, a number of different methods can be combined at the micro level. Realist Evaluation’s understanding of mechanisms as resources and reasoning can be embedded within a “systems” form of Process Tracing. A theory of change built on the COM-B model (capability, opportunity, and motivation driving behaviour) can help bolster Contribution Analysis; COM-B also provides the core of the Actor-based Change (ABC) framework, which views behaviour change in a similar light.

However, Realist Evaluation (RE) is also geared toward generating middle-range theory. It aims to stand on the “shoulders of giants,” building on existing theories (see also Pawson, 2013). RE focuses on individual actors in specific settings as well as on how groups of actors tend to act in some settings. Punton et al. (2020) find Sartori’s ladder of abstraction metaphor useful to communicate the way in which we can provide different types and levels of explanation. See their representation of this below:

Realists are not alone, of course. There are a variety of “candidate” middle-range theories we can draw on to explain parts of operational, overview, or narrative theories of change. One of the best explanations of such templates is Sarah Stachowiak’s (2013) 10 Theories to Inform Advocacy and Policy Change Efforts. This includes global theories such as “punctuated equilibrium,” “policy windows,” and “coalitions,” and tactical theories such as “framing” or “diffusion.” You’d be surprised how often you use these theories without even realising it.

Nested theories of change

Ladders of abstraction also speak to how we build theory and what we’re trying to communicate through that theory. How “major” are your premises? How much support might they need?

John Mayne (2015; 2018: 2-3), in particular, has asked just how much detail we should include in a theory of change. He suggests that an intervention actually requires different versions of its theory of change, and helpfully proposes three:

- Narrative (elevator pitch) theory of change, which describes in very general terms how the intervention is intended to work. This can be a general “if… then… because” statement of the key pathways of the intervention and its intended results.

- Overview (big picture) theory of change, which provides a simplified model showing the main pathways to impact (including propositions) and any rationale assumptions (or paradigmatic and normative assumptions).

- Operational (causal) theory of change, which unpacks key steps in impact pathways and articulates causal links (necessary events and conditions), as well as the causal and implementation assumptions that apply to particular parts of the ToC but not to the ToC as a whole (as the assumptions in an overview theory do).

These nested theories of change can be broken down into smaller parts (link-by-link, pathway-by-pathway) which provide support for the overall theory. You can zoom in on key parts: processes, events, decisions. See Istvan Banyai’s extraordinary “Zoom” video to get a sense of how much we can actually zoom in (or out).
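
One way to picture nested theories of change is as a tree, where each claim is supported by finer-grained steps beneath it and zooming in simply means showing more levels. The Python sketch below uses a hypothetical social accountability pathway (the wording is mine, not taken from any evaluation), and the `Step` class and `zoom` function are illustrative names. Mapping depths 0, 1, and 2 onto Mayne’s narrative, overview, and operational versions is only a loose analogy: his versions also differ in the kinds of assumptions they make explicit.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Step:
    """One claim or causal link; its children are the finer steps that support it."""
    claim: str
    children: list[Step] = field(default_factory=list)


def zoom(step: Step, depth: int, indent: int = 0) -> list[str]:
    """Return the claims visible when zoomed in `depth` levels below this step."""
    lines = ["  " * indent + step.claim]
    if depth > 0:
        for child in step.children:
            lines.extend(zoom(child, depth - 1, indent + 1))
    return lines


# Hypothetical pathway, for illustration only.
toc = Step("Citizen monitoring improves CCT service delivery", [
    Step("Beneficiaries learn about their entitlements", [
        Step("Information sessions are held"),
        Step("Beneficiaries attend and understand the sessions"),
    ]),
    Step("Beneficiaries report problems", [
        Step("A complaint channel exists"),
        Step("Beneficiaries feel safe using it"),
    ]),
    Step("Providers respond to complaints"),
])

print("\n".join(zoom(toc, depth=0)))  # roughly narrative: the headline claim only
print("\n".join(zoom(toc, depth=1)))  # roughly overview: the main pathways
print("\n".join(zoom(toc, depth=2)))  # roughly operational: link-by-link steps
```

The same tree serves all three audiences; what changes is how far down you choose to look, which is exactly the trade-off about where and how much to zoom in.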

We often don’t know quite how granular to be or how many steps to include. A recent process tracing evaluation of social accountability efforts in a conditional cash transfer (CCT) programme in the Dominican Republic, for instance, appears to present an operational theory of change:

However, the above actually represents broad stages rather than specific steps. The evaluation used the same definition of a mechanism employed in Contribution Tracing, but consolidated the steps. In some respects, this makes it more useful for policy-makers (as it’s more synthesised), but it’s also possible that key steps and data were missing. I did a review of the programme a few years before (which Raimondo cited), and I would have broken the parts down further. However, there’s always a trade-off in the real world over where and how much to zoom in.

Usefully transferable theory

The ineluctable urge in evaluation, driven both by the tide of Randomised Controlled Trials (RCTs) and by theory-based methods such as Contribution Tracing, Contribution Analysis, and the Qualitative Impact Assessment Protocol (QuIP), has been to seek the most granular applications and explanations. While the most granular applications can be more rigorous, they can also be more context-specific. They run the risk of leaving policymakers with a big “so what?” question, because policymakers aren’t just looking for what worked here, yesterday; they’re looking for what will work over there, tomorrow.

So the question is: how well do the most granular explanations in evaluation, and their sub-sub-argument premises, travel?

Realist Evaluation’s emphasis on using heuristic theory templates in literature reviews can help make theory more portable. But Nancy Cartwright has recently gone a little further with her 10 steps towards the construction of middle-level theory, and I think this has some significant advantages over current efforts in Realist Evaluation.

Cartwright’s middle-level “causal principles” are one way to help bridge the general and the particular. These include principles such as ‘people respond to incentives,’ or, slightly more granular, ‘people offered Conditional Cash Transfers (CCTs) to do something tend to do it.’ So they describe what people tend to do, but won’t always do. As realists would be keen to point out, different people respond to different incentives in different contexts.

Cartwright proposes a causal-process-tracing theory of change (pToC), which sits somewhere in between the operational and overview theories of change mentioned above. Beyond the causal principles, what stood out to me was the proposal to explicitly include positive and negative “moderators.” Positive moderators are called “support factors.” One could argue this resembles the kind of context-mechanism interactions we ought to elicit in Realist Evaluation (but also in Process Tracing).

Returning to the CCT example, one such moderator is the “credible threat of enforcement.” In practice, governments don’t always enforce conditions or impose sanctions in CCT programmes. In Latin America (the spiritual home of CCTs), credible threats, enforcement of conditions, and formal sanctions on recipients are rare (see here for an exception).

This moderator also applies to social accountability. Much of social accountability theory is built on the assumption that this moderator plays a crucial role in achieving service delivery impact (e.g. children’s test scores, maternal mortality rates). However, it is actually a condition that is quite commonly absent. And since there are various examples where impact has been achieved without it, it clearly does not act as a moderator in all contexts. This tells us there are limits to how far theories that include this condition will travel.

The reverse of a support factor is called a “derailer”: something that gets in the way. If credible threat of enforcement were a support factor, then a recent history of reprisals against civil society actors who complain, protest, or litigate might be a derailer. In this case, so-called “safeguards” could be put in place to prevent derailers from getting in the way (anonymous Grievance Redress Mechanisms, or GRMs, for instance). Such safeguards could help limit the externalities that might come from attempted litigation.
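
A crude way to see how moderators condition a causal principle is to check a given context for missing support factors and for derailers that lack a safeguard. This Python sketch is my own simplification, not Cartwright’s formulation: the `CausalPrinciple` class and `expected_to_operate` method are hypothetical names, the principle’s wording is illustrative, and treating every support factor as strictly required is exactly the kind of assumption the social accountability examples above call into question.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class CausalPrinciple:
    """A 'tends to' claim plus the moderators that condition it (a simplified, hypothetical model)."""
    statement: str
    support_factors: set[str] = field(default_factory=set)
    # Maps each derailer to the safeguard that would neutralise it.
    derailers: dict[str, str] = field(default_factory=dict)

    def expected_to_operate(self, context: set[str]) -> tuple[bool, list[str]]:
        """Check the conditions present in a context; return (ok, reasons the principle may fail)."""
        problems = [f"missing support factor: {s}" for s in sorted(self.support_factors - context)]
        for derailer, safeguard in sorted(self.derailers.items()):
            if derailer in context and safeguard not in context:
                problems.append(f"unguarded derailer: {derailer} (safeguard: {safeguard})")
        return not problems, problems


# Illustrative social accountability principle (hypothetical wording).
principle = CausalPrinciple(
    statement="Citizens who complain about poor services tend to see those services improve",
    support_factors={"credible threat of enforcement"},
    derailers={"recent reprisals against complainants": "anonymous GRM"},
)

context = {"recent reprisals against complainants", "anonymous GRM"}
ok, problems = principle.expected_to_operate(context)
print(ok, problems)
```

Here the anonymous GRM neutralises the reprisal derailer, but the principle is still flagged because the credible threat of enforcement is absent. A richer version might weight moderators rather than treat them as all-or-nothing, so that cases where impact happened without enforcement aren’t ruled out by construction.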

However, this exercise of teasing out moderators for social accountability suggests that you would not only need to limit the externalities that might come from pursuing sanctions (balancing feedback), but would also need self-reinforcing feedback in the opposite direction. Something (or someone) needs to reverse the process. And it’s entirely possible that in some contexts self-reinforcing feedback towards system change might happen with entirely different (positive and negative) moderators.

For me, the moderator angle is helpful because it raises, in a refreshingly new way, deeper questions about how context and mechanisms are supposed to interact.